Deloitte Is Hiring

| Company | Role | Salary | Location | Experience | Education |
| --- | --- | --- | --- | --- | --- |
| Deloitte | Data Engineer | 4.5 LPA (expected) | Multiple | Freshers | BE, B-Tech, MS, MBA, ME, M-Tech |

Deloitte Off-Campus Drive 2023 for Freshers

Job Description

Deloitte is hiring freshers through its 2023 off-campus drive, and this post covers the details of that drive. Deloitte is a reputed organization and a good place to start your career as a fresher. Managers at Deloitte are friendly to work with: if you get stuck, they will help you through it and give you time to build confidence and grow into both a hard worker and a smart worker. If you have a work-related question, cannot complete a particular task, or hit an error you can’t solve, the seniors at Deloitte are friendly with fresh candidates and will teach you “how to solve real-life problems”.

The detailed eligibility criteria and application process are given below.

Position Summary

- Develop solutions with an Agile Development team.

- Define, produce, test, review, and debug solutions.

- Create component-based features and micro-frontends.

- Database development with Postgres

- Create comprehensive unit test coverage in all layers.

- Deploy solutions to Docker containers and Kubernetes.

- Help build a team culture of autonomy and ownership.

- Work with a Product Owner to refine stories into functional use cases and identify the work effort as tasks.

- Participate in test case creation responsibilities and peer reviews prior to coding.

- Review implementation plans of peers prior to their coding.

- Demonstrate feature work at the end of each iteration.

- Work from home when desired with infrequent visits to the office and limited travel for planning sessions.

- Develop our ETL process into a robust, automated, production-quality solution and lead its implementation and delivery.

- Peer with the application engineering team to ensure our data model fits the needs of the solution while promoting best practices in its design from both a maintenance and a performance perspective.

- Peer with the data science team in understanding their needs for preparing large datasets for machine learning.

- Assist the team with understanding the execution plan of poorly written queries. Help remediate performance problems by assisting the performance tuning of queries and/or refining the data model to meet the needs of the business.

- Build data systems and pipelines.

- Evaluate business needs and objectives.

- Explore ways to enhance the Product/pipeline.

- Collaborate with the team.

- Showcase skills and innovative ideas to the team biweekly.

- Strong knowledge of Python & SQL.

- Hands-on experience with SQL database design

- Hands-on experience with, or knowledge of, Airflow.

- Knowledge of Docker and Kubernetes

- Experience with running containerized microservices.

- Experience with Apache Spark or AWS EMR.

- Experience with cloud platforms (AWS, Azure) with strong preference towards AWS.

- Experience in Database design practices

- Technical expertise with Data warehouse or Data Lake

- Expertise in configuring and maintaining PostgreSQL.

- Experience performance tuning queries and data models to produce the best execution plan.

- Strong experience building data pipelines & ETL.

- Experience working on an Agile Development team and delivering features incrementally.

- Experience with Git repositories

- Working knowledge of setting up builds and deployments

- Experience with both Windows and Linux.

- Experience demonstrating work to peers and stakeholders for acceptance

- Ability to multi-task, be adaptable, and nimble within a team environment.

- Strong communication, interpersonal, analytical and problem-solving skills.

- Ability to communicate effectively with nontechnical stakeholders to define requirements.

- Ability to quickly understand new client data environments and document the business logic that composes them.

- Ability to integrate oneself into geographically dispersed teams and clients.

- A passion for high-quality software.

- Previous experience as a data engineer or in a similar role.

- Eagerness to learn and seek new frameworks, technologies, and languages

- Commitment to working with others and sharing knowledge on a regular basis

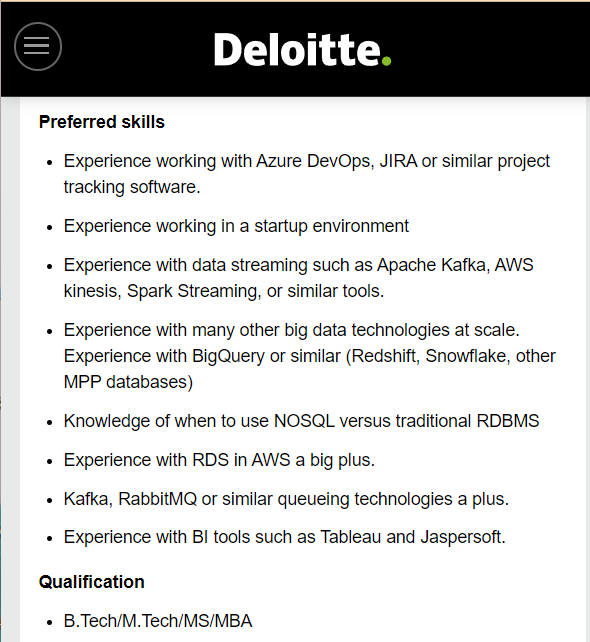

- Experience working with Azure DevOps, JIRA or similar project tracking software.

- Experience working in a startup environment

- Experience with data streaming tools such as Apache Kafka, AWS Kinesis, Spark Streaming, or similar.

- Experience with other big data technologies at scale.

- Experience with BigQuery or similar (Redshift, Snowflake, other MPP databases).

- Knowledge of when to use NoSQL versus a traditional RDBMS.

- Experience with RDS in AWS a big plus.

- Kafka, RabbitMQ or similar queueing technologies a plus.

- Experience with BI tools such as Tableau and Jaspersoft.

- B.Tech/M.Tech/MS/MBA

To learn more about this job opportunity, click the “Apply Now” link below.

How to Apply: Deloitte Off-Campus Drive (please apply before the link expires)

Method 1: Apply through the Deloitte careers page.

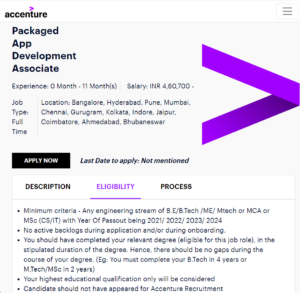

Method 2: Apply through the direct link provided.

To apply through the direct link:

Step 1: Click the Apply Now link below.

Step 2: You will be redirected to the Deloitte careers page.

Step 3: Fill in all required details in the form for the job you are applying for.

Step 4: Click the Submit button.

Step 5: Wait a few days; Deloitte will contact you about your interview process.

Deloitte Recruitment 2023: Hiring Data Engineer | BE/B-Tech/ME/M-Tech/MS/MBA | Multiple | 4.5 LPA*
